In sensor development, sensitivity is often seen as a critical figure of merit, one that can open doors to new applications and opportunities. This perspective is especially common when new sensor technologies emerge from fundamental science, where the focus is on observing novel phenomena and the whole experimental apparatus is tailored to that single discovery.

However, translating a highly sensitive sensor from the lab into practical applications requires a broader perspective—one that considers not just the sensor, but the entire system it operates within. Simply swapping in a more sensitive sensor doesn’t always yield the expected gains, because the system itself must be capable of leveraging that sensitivity.

For example, magnetometers need both high sensitivity and a magnetically quiet environment to function effectively. Similarly, accelerometers used in space gravity missions must not only be highly sensitive, but also be supported by models capable of distinguishing fast tidal shifts from slower, long-term changes. Radiometers, meanwhile, require not just sensitive microwave receivers, but also thermally stable reflectors to maintain accurate measurements.

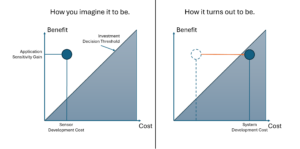

Before scaling up investment in a new sensor technology, it’s helpful to take a step back and assess whether the broader system can support and benefit from the sensitivity gain. A simple initial cost-benefit analysis, focused on identifying the subsystems and components that could be sources of noise, can provide valuable insights. High-level modeling can also help clarify whether additional investments beyond sensor development might be needed to fully capitalize on the new technology.
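
As a minimal sketch of what such a high-level model could look like, assume the noise sources are independent, so that they add in quadrature (root-sum-square). The component names and numbers below are hypothetical and chosen only for illustration; a real budget would come from measurements or specifications of the actual system.

```python
import math

def total_noise(budget):
    """Root-sum-square of independent noise contributions (all in the same units)."""
    return math.sqrt(sum(value ** 2 for value in budget.values()))

def system_gain(budget, component, improvement_factor):
    """Factor by which total system noise drops when one component's
    noise is reduced by `improvement_factor`."""
    improved = dict(budget)
    improved[component] = budget[component] / improvement_factor
    return total_noise(budget) / total_noise(improved)

# Hypothetical noise budget in arbitrary units (assumed values, not real data).
budget = {"sensor": 3.0, "environment": 4.0, "electronics": 1.0}

# A sensor that is 10x more sensitive...
gain = system_gain(budget, "sensor", 10.0)
print(f"System-level improvement: {gain:.2f}x")
```

With these assumed numbers, a tenfold better sensor improves the system by only about 1.2x, because the environmental contribution dominates the budget. The same sketch can be turned around to ask which other contribution would need to shrink before the sensor upgrade pays off.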

Assuming that sensitivity gains alone will always improve a solution is what I call the Sensitivity Gain-Fallacy.
